**Source available at http://mergeworkitems.codeplex.com/**

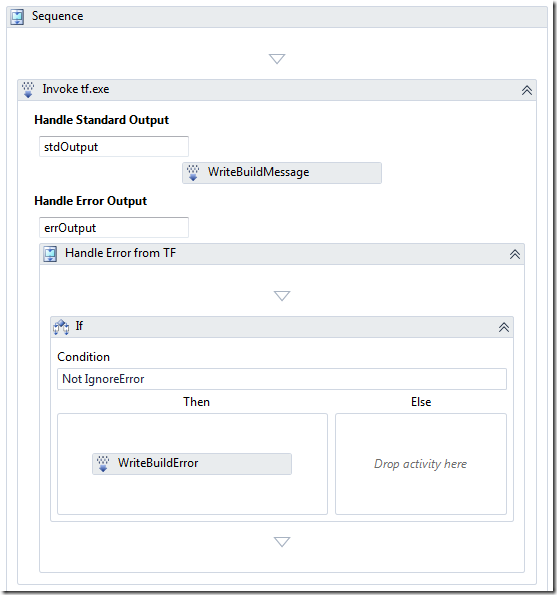

Half a year ago I wrote about Merging Work Items with a custom check-in policy. The policy evaluated the pending changes and, for all pending merges, traversed the merge history to find the associated work items and let the user add them to the current changeset.

I promised to post the source to the check-in policy (and I’ve had a lot of requests for it), but I never did. This was primarily for two reasons:

- The technical solution turned out to be a bit complicated. The problem was/is that it is not possible to modify the list of associated work items in the current pending changes using the API. This was a show stopper and the only way around it was to add another component that was executed on the server after the check-in that did the actual association. The information about the selected work items was temporarily stored in the comments of the changeset. This worked, but complicated the deployment.

- The feedback internally at Inmeta was a question: why should the developer be allowed to select which work items should be associated? If a work item was associated with a changeset in a Main branch, it should always be associated with the merge changeset when merging to a Release branch. So the association should be done automatically.

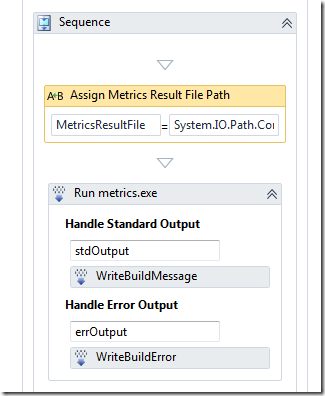

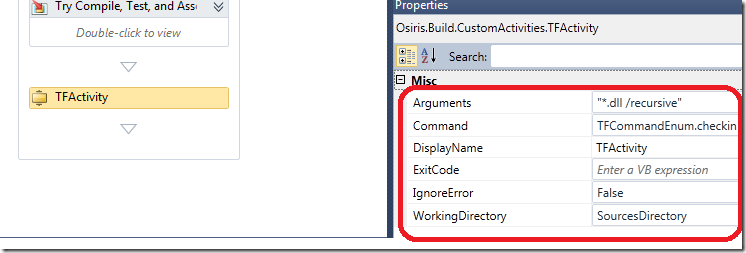

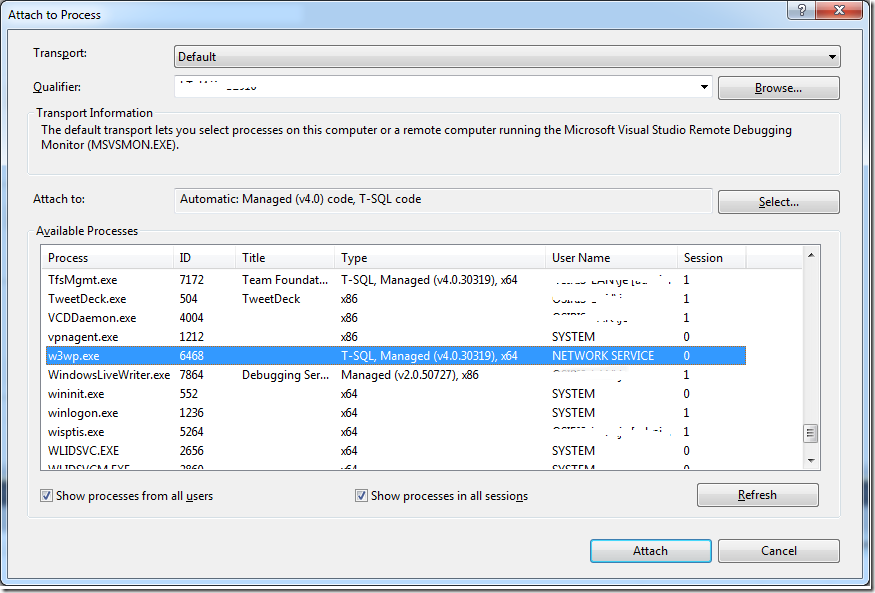

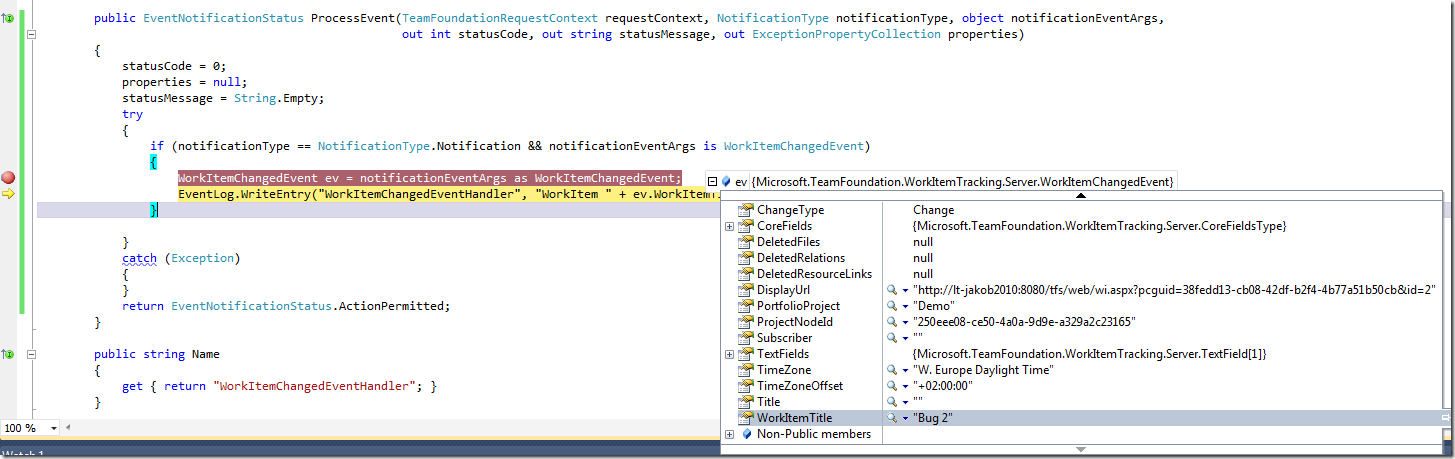

For these reasons I changed the implementation and converted the check-in policy to a TFS server side event handler instead (for more info on working with these event handlers, check out my post about them here: Server Side Event Handlers in TFS 2010).

By having the association done server side, the process is very smooth for the developer. When a changeset that contains merges is checked in, the event handler evaluates all merges and associates the work items. If a work item has already been associated by the developer, it is of course not associated again. Since it is implemented with a server side event handler, it runs instantly without the user ever really noticing it.
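To make the association step concrete, here is a minimal sketch of the idea in Python. It is not the event handler's actual code (that is C# against the TFS object model); the `Changeset` class and `work_items_to_associate` function are hypothetical stand-ins, and the sketch assumes that merge history may be nested, so it keeps following sources until none are left.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Changeset:
    """Hypothetical stand-in for a TFS changeset; not the real TFS object model."""
    id: int
    work_items: set[int] = field(default_factory=set)
    # IDs of the changesets whose changes were merged into this one.
    merged_from: list[int] = field(default_factory=list)


def work_items_to_associate(new: Changeset, history: dict[int, Changeset]) -> set[int]:
    """Collect work items from every changeset reachable through the merge
    history, minus the ones the developer already associated by hand."""
    found: set[int] = set()
    seen: set[int] = set()
    stack = list(new.merged_from)
    while stack:
        cs_id = stack.pop()
        if cs_id in seen or cs_id not in history:
            continue  # already visited, or unknown changeset
        seen.add(cs_id)
        source = history[cs_id]
        found |= source.work_items
        stack.extend(source.merged_from)  # follow nested merges
    return found - new.work_items
```

For example, if changesets 101 and 102 in Main carry work items 7 and 8, and the merge changeset already has work item 7 associated by the developer, only work item 8 is left to associate.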

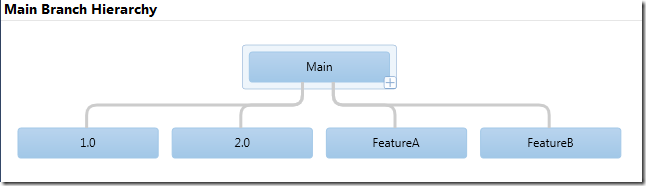

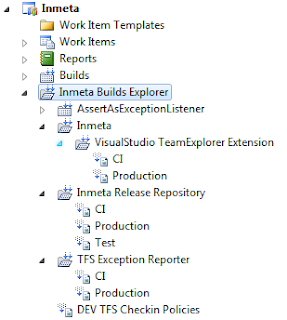

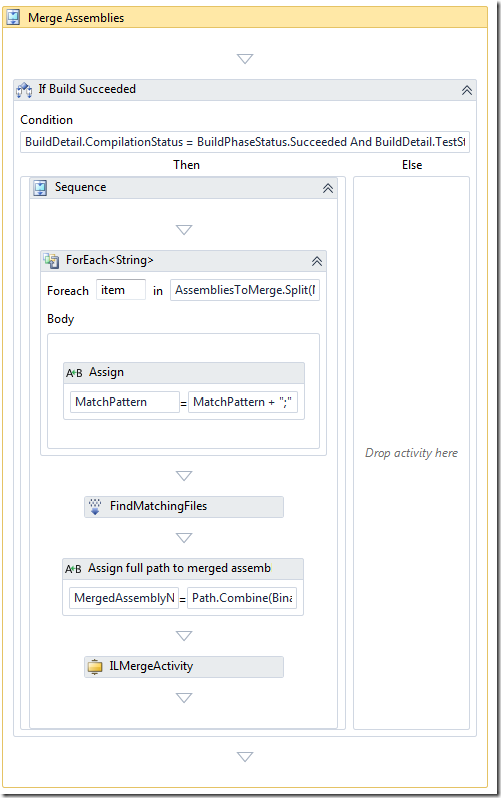

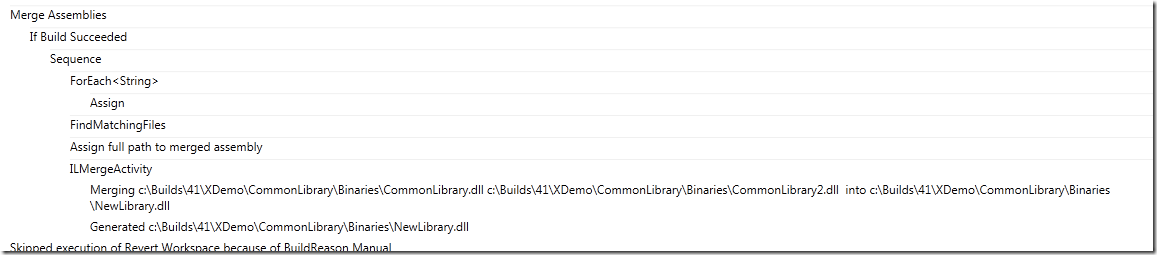

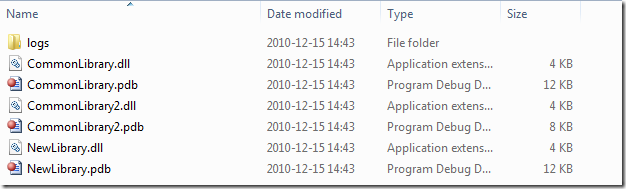

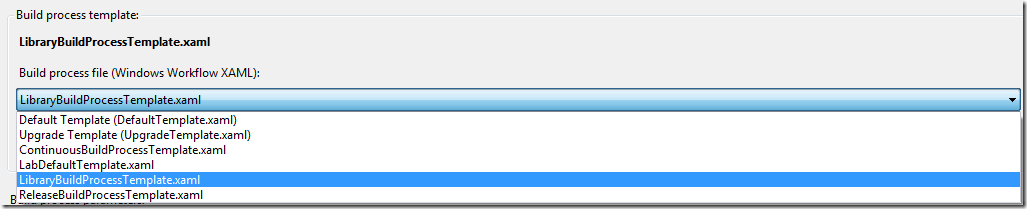

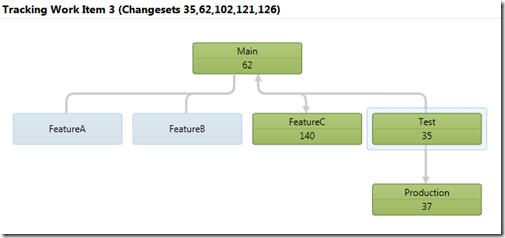

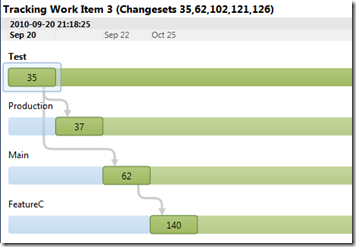

Let’s look at how this works. Let’s say that we have the following branch hierarchy:

Now, one of our finest developers has both fixed a bug and added a new user story in the Main branch. That made two changesets, each associated with its corresponding work item:

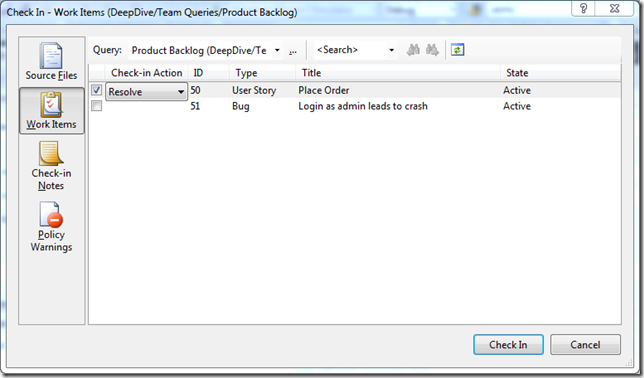

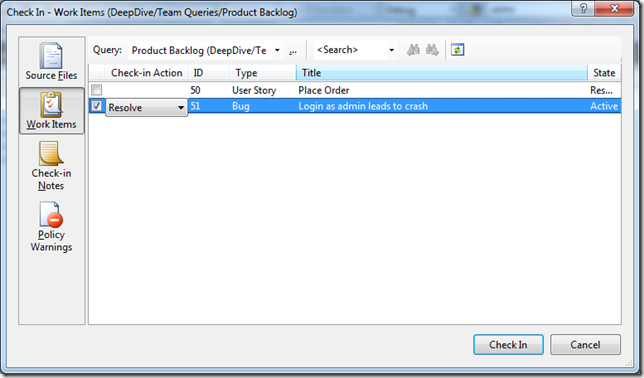

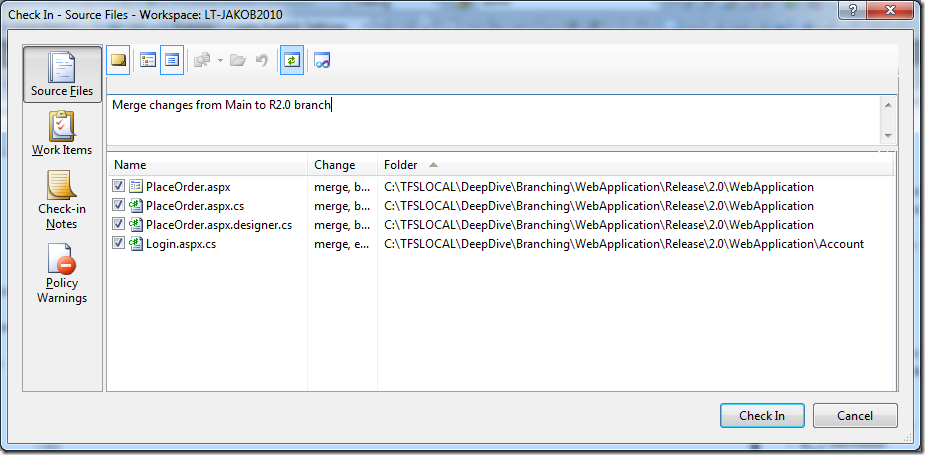

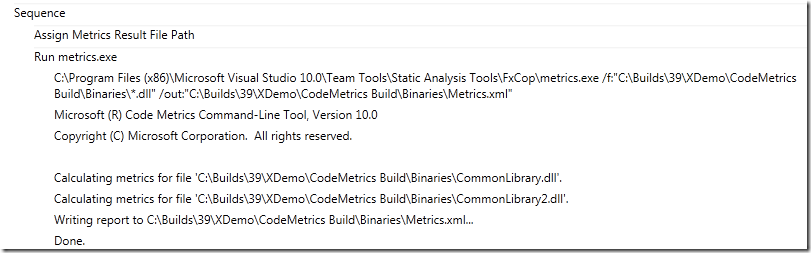

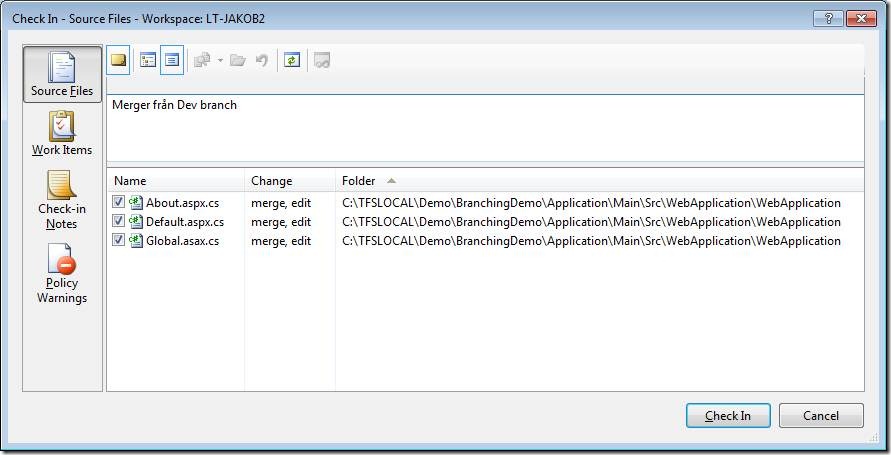

Now, we want to push these changes to the 2.0 Release branch. So the developer performs a merge from Main to the 2.0 branch.

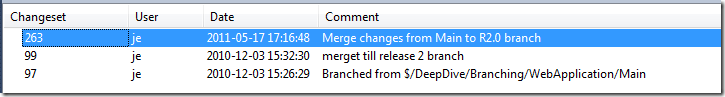

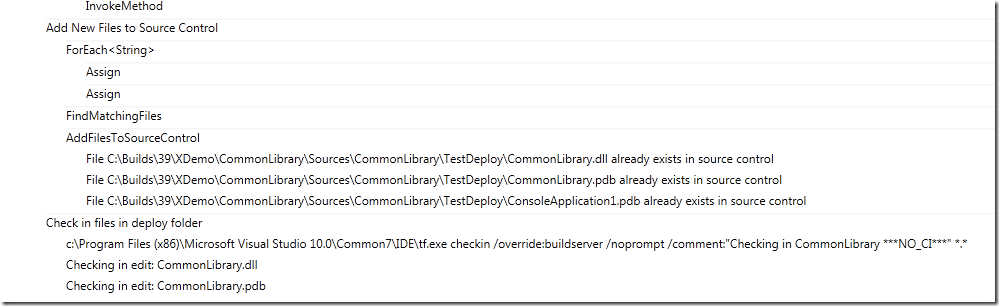

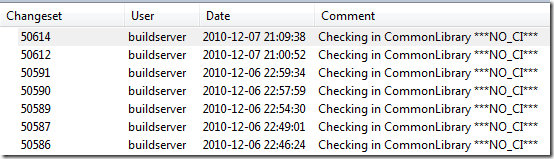

At this point, instead of manually adding the work items, the developer just checks in the changes. After this, let’s take a look at the source history:

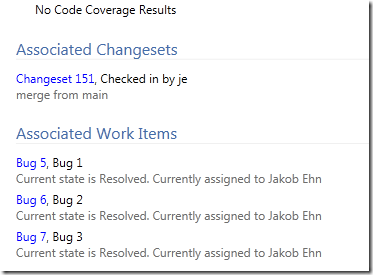

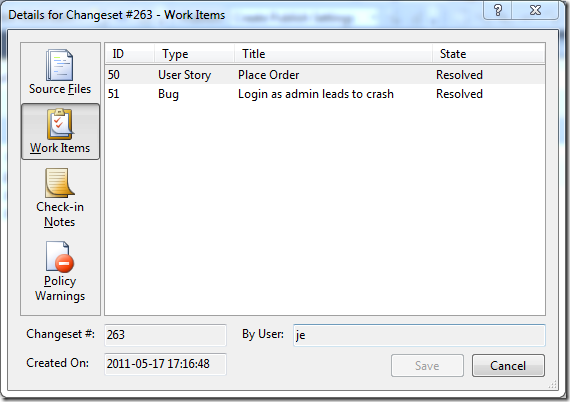

Double-clicking on the latest changeset, we can see the following information on the work items tab:

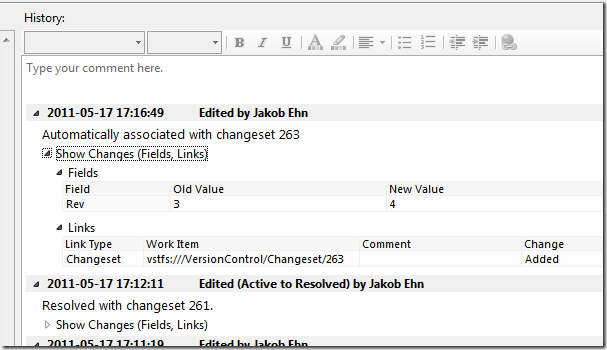

The work items associated with the original changesets that were merged to the R2.0 branch have been associated with the new changeset. Also, double-clicking on one of the work items, we can see that the server event handler has added a link to the changeset for this work item:

Note the following:

- The changeset has been linked in the same way as when you associate a work item manually.

- The change was done by the developer account (myself in this sample), and not the TFS service account. This is because the event handler uses the new TFS Impersonation API to impersonate the user who committed the check-in.

- The history comment is a bit different than the usual (“Associated with changeset XXX”), just to highlight the reason for the change.

- If the changeset contains both merges and other types of pending changes, the merges are still traversed and the other changes are simply ignored.
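The last point can be illustrated with a small Python sketch. It assumes the change type behaves like a set of flags, so a single file can be both merged and edited in the same changeset. The `ChangeType`, `Change` and `merge_changes` names are made up for this illustration and are not the TFS API.

```python
from enum import Flag, auto
from typing import NamedTuple


class ChangeType(Flag):
    """Hypothetical flags, loosely modeled on the kinds of changes a changeset can hold."""
    ADD = auto()
    EDIT = auto()
    DELETE = auto()
    MERGE = auto()


class Change(NamedTuple):
    path: str
    change_type: ChangeType


def merge_changes(changes: list[Change]) -> list[Change]:
    """Keep only changes that include a merge; a merge combined with an edit still counts."""
    return [c for c in changes if c.change_type & ChangeType.MERGE]
```

Only the changes returned by `merge_changes` would need their merge history traversed; plain edits, adds and deletes fall through the filter.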

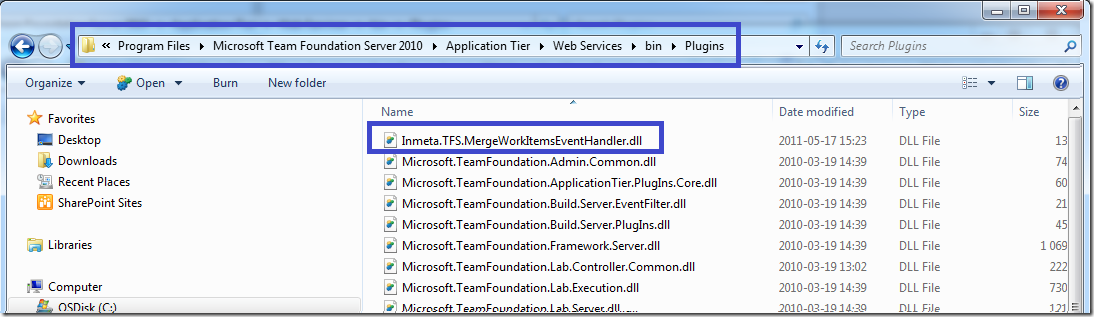

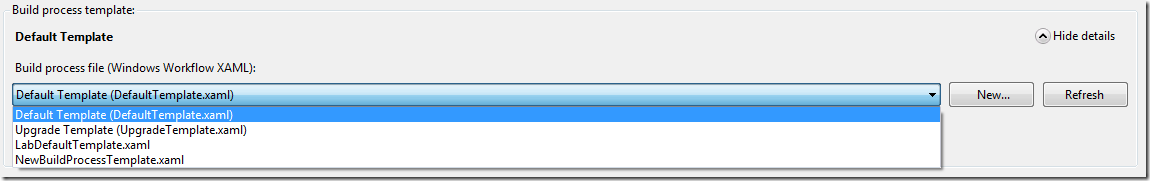

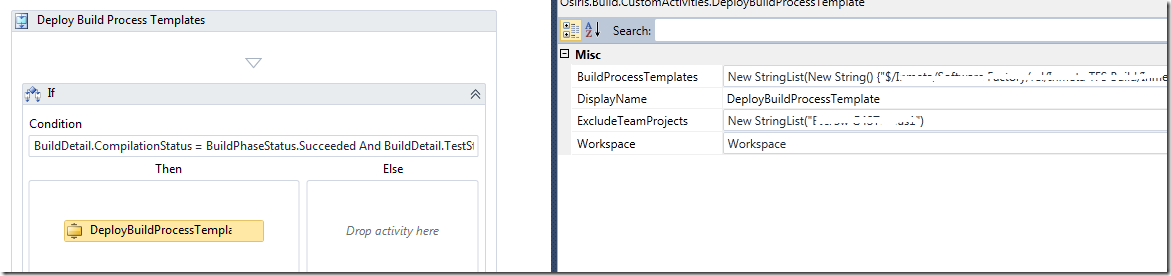

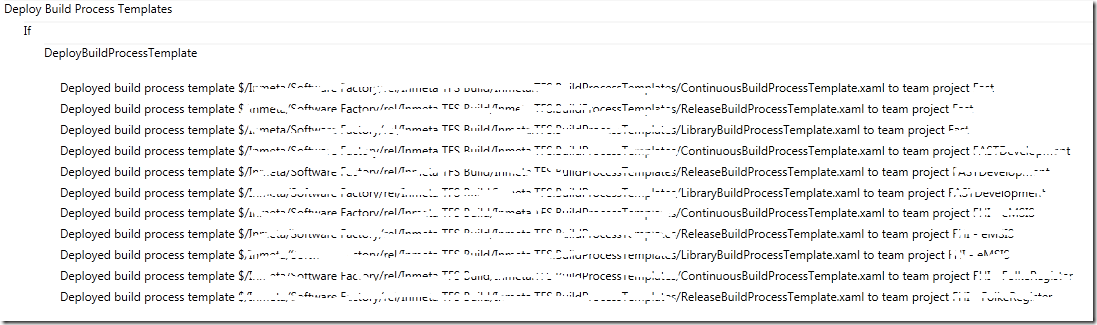

Deployment

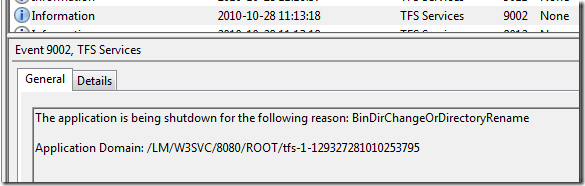

Deploying the server side event handler couldn’t be easier: just drop the Inmeta.TFS.MergeWorkItemsEventHandler.dll assembly into the plugin directory of the TFS AT server. This path is usually %PROGRAMFILES%\Microsoft Team Foundation Server 12.0\Application Tier\Web Services\bin\Plugins. Note: This will cause TFS to recycle to load the new assembly, so in production you might want to schedule this to minimize problems for your users.

See my post for more details on this.
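If you want to script the copy step, something like the following Python sketch would do. The `deploy_plugin` helper is hypothetical and not part of the project. It only copies the file and fails early if either path is wrong, and it assumes the account running it has write access to the plugin directory.

```python
import shutil
from pathlib import Path


def deploy_plugin(assembly: Path, plugins_dir: Path) -> Path:
    """Copy the event handler assembly into the TFS plugin directory."""
    if not assembly.is_file():
        raise FileNotFoundError(f"Assembly not found: {assembly}")
    if not plugins_dir.is_dir():
        raise NotADirectoryError(f"Plugin directory not found: {plugins_dir}")
    # copy2 preserves timestamps; an existing copy of the assembly is overwritten.
    return Path(shutil.copy2(assembly, plugins_dir / assembly.name))
```

Call it with the path to the built DLL and the plugin directory of your own server, which may differ from the usual location. Remember that the copy triggers the TFS recycle mentioned above.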

Implementation

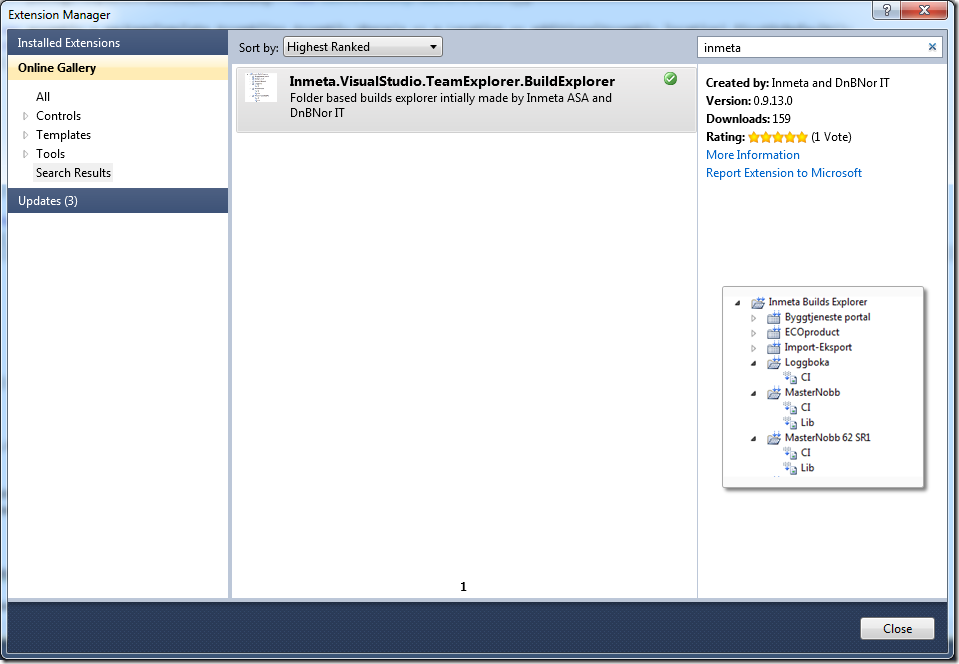

I have uploaded the source code to CodePlex at: http://mergeworkitems.codeplex.com/ so you can check out the details there. One thing that is worth mentioning is that I had to resort to the TFS Client API to access and modify the associated work items. The TFS Server Side object model is not well documented, and according to Grant Holliday (in this post) the server object model for work item tracking is not very useful. So instead of going down that road, I used the client object model to do the work. Not as efficient, but it gets the job done.

Note that the event handler hooks into the Notification decision point, which is executed asynchronously after the check-in has been done, and therefore it doesn’t have a negative impact on the overall check-in process.

Hope that you find the event handler useful, contact me either through this blog or via the CodePlex site if you have any questions and/or feature requests.

![clip_image002[5] clip_image002[5]](https://blogehn.azurewebsites.net/wp-content/uploads/2019/05/clip_image0025_thumb.jpg)